Research (Under Construction)

My research broadly concerns the security of machine learning systems, as well as the use of machine learning for security. Machine learning is becoming ubiquitous, so understanding its vulnerabilities is a pressing concern both for those who deploy these systems and for those affected by them. My work spans attacks on and defenses for machine learning systems, as well as the development of a fundamental understanding of their robustness.

Fundamental bounds on robustness

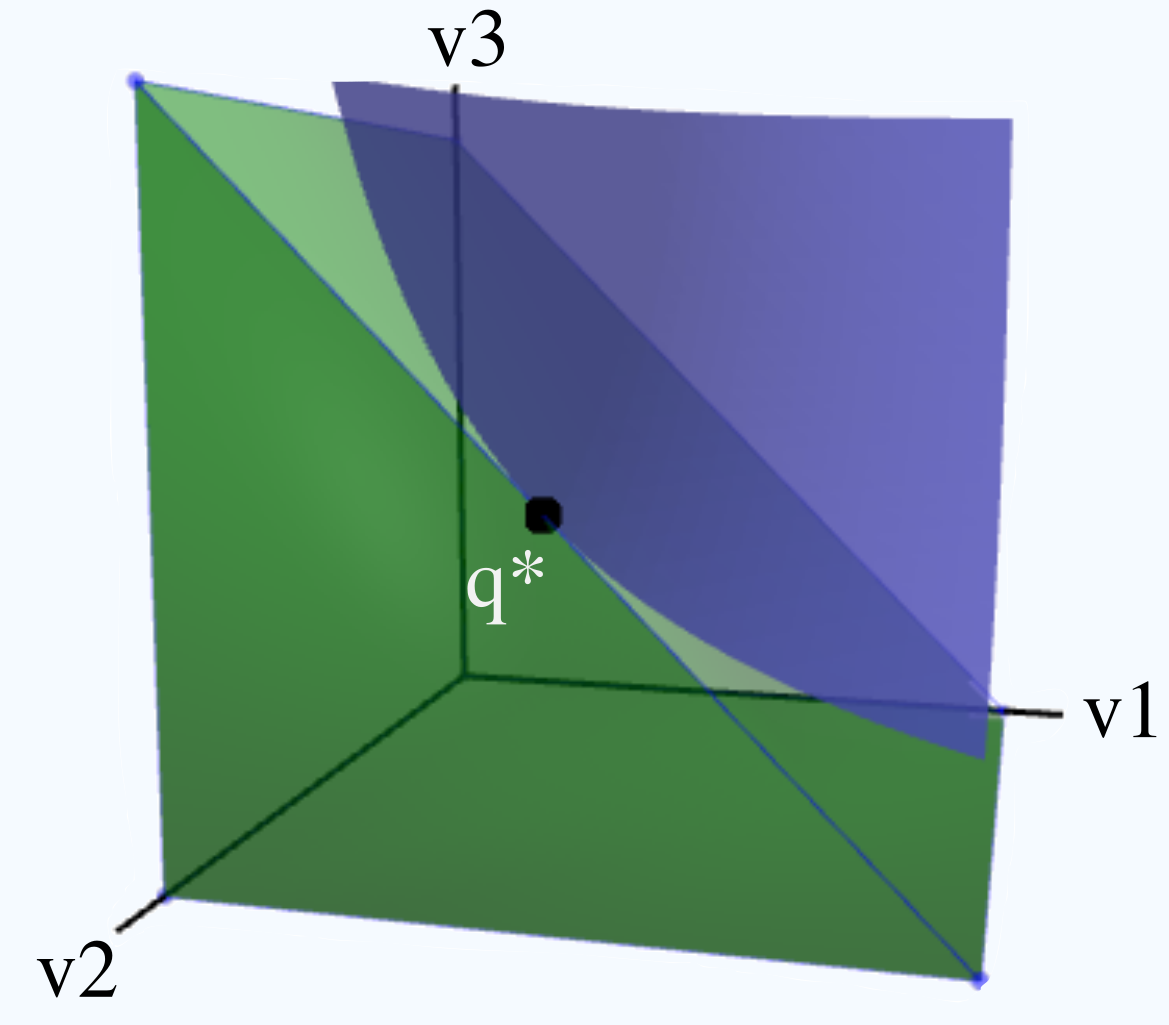

Evasion (or test-time) attacks apply worst-case perturbations to the data an ML system encounters at test time, and a wide variety of attacks and defenses have been proposed. It is thus critical to understand the fundamental limits of learning in the presence of such attackers from two standpoints: i) how the process of learning itself is affected by the presence of such an attacker, and ii) how much, if at all, the lowest loss achievable by any classifier changes under a given attack. Our work at NeurIPS 2018 tackled the first question, demonstrating the impact of evasion attacks on the number of samples required to learn good classifiers. In two papers, at NeurIPS 2019 and ICML 2021, we leveraged the theory of optimal transport to characterize the impact of test-time attacks on the minimum achievable 0-1 and cross-entropy loss, respectively.
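To make the second question concrete: for a finite, equally weighted sample from two classes, the lowest 0-1 loss any classifier can achieve against an attacker who may move each point within an ℓ2 ball of radius ε reduces to a bipartite matching problem. Two points from different classes "conflict" when their ε-balls overlap (distance at most 2ε), and no classifier can be robustly correct on both. By König's theorem, the minimum loss is the size of a maximum matching in this conflict graph divided by the number of points. The sketch below is a minimal illustration of that finite-sample view, assuming binary labels, uniform sample weights, and ℓ2 perturbations; the function names are hypothetical and this is not the code from those papers.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def conflict_graph(class0: List[Point], class1: List[Point], eps: float) -> List[List[int]]:
    """adj[i] lists the class-1 points whose eps-ball overlaps that of class0[i]."""
    # Two closed eps-balls intersect exactly when the centers are within 2 * eps.
    return [[j for j, q in enumerate(class1) if math.dist(p, q) <= 2 * eps] for p in class0]


def max_bipartite_matching(adj: List[List[int]], n_right: int) -> int:
    """Kuhn's augmenting-path algorithm for maximum bipartite matching."""
    match_right = [-1] * n_right  # match_right[v] = left vertex matched to v, or -1

    def try_augment(u: int, seen: List[bool]) -> bool:
        for v in adj[u]:
            if not seen[v]:
                seen[v] = True
                # Take v if it is free, or if its current partner can be re-matched.
                if match_right[v] == -1 or try_augment(match_right[v], seen):
                    match_right[v] = u
                    return True
        return False

    return sum(try_augment(u, [False] * n_right) for u in range(len(adj)))


def min_adversarial_01_loss(class0: List[Point], class1: List[Point], eps: float) -> float:
    """Lowest 0-1 loss any classifier can reach on this sample under an l2 attacker."""
    adj = conflict_graph(class0, class1, eps)
    # Each matched conflicting pair forces at least one error, and the bound is tight.
    return max_bipartite_matching(adj, len(class1)) / (len(class0) + len(class1))


if __name__ == "__main__":
    c0 = [(0.0, 0.0), (3.0, 0.0)]
    c1 = [(1.0, 0.0), (10.0, 0.0)]
    for eps in (0.4, 0.6, 10.0):
        print(eps, min_adversarial_01_loss(c0, c1, eps))
```

As ε grows, more cross-class pairs conflict and the achievable loss rises toward 1/2, which is the intuition behind the optimal-transport bounds: the attacker effectively makes the two class distributions harder to separate.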

Practical attacks on machine learning systems

Machine learning systems are vulnerable to attacks that aim to induce misclassification of inputs, either via test-time (evasion) or training-time (poisoning) manipulation. However, most of these attacks make unrealistic assumptions about the attacker’s capabilities, such as white-box access to the internals of the ML system. Our work on black-box attacks was the first to demonstrate that attackers with only query access to the ML system under attack can be as powerful as white-box attackers.
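One standard way a query-only attacker can recover white-box power is to estimate the gradient of the model's loss with finite differences and then take a signed gradient step, as in the Fast Gradient Sign Method. The sketch below is an illustration under stated assumptions, not the implementation from that work: the attacker is assumed to be able to query a scalar loss for any candidate input, and the small logistic "target model" and all function names are hypothetical stand-ins.

```python
import math
from typing import Callable, List

Vector = List[float]


def make_black_box(weights: Vector, bias: float, label: int) -> Callable[[Vector], float]:
    """Hypothetical target: a logistic model whose internals the attacker never reads.

    The attacker only sees the cross-entropy loss returned for a queried input.
    """
    def query(x: Vector) -> float:
        logit = sum(w * xi for w, xi in zip(weights, x)) + bias
        p1 = 1.0 / (1.0 + math.exp(-logit))  # probability of class 1
        p_true = p1 if label == 1 else 1.0 - p1
        return -math.log(p_true)
    return query


def estimate_gradient(query: Callable[[Vector], float], x: Vector, delta: float = 1e-4) -> Vector:
    """Two-sided finite differences: 2 * len(x) queries, no access to model internals."""
    grad = []
    for i in range(len(x)):
        x_plus, x_minus = list(x), list(x)
        x_plus[i] += delta
        x_minus[i] -= delta
        grad.append((query(x_plus) - query(x_minus)) / (2 * delta))
    return grad


def black_box_fgsm(query: Callable[[Vector], float], x: Vector, eps: float) -> Vector:
    """One signed step of size eps (an l-infinity attack) using the estimated gradient."""
    grad = estimate_gradient(query, x)
    sign = lambda g: (g > 0) - (g < 0)
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


if __name__ == "__main__":
    query = make_black_box([2.0, -1.0, 0.5], 0.1, label=1)
    x = [0.5, 0.2, -0.3]
    x_adv = black_box_fgsm(query, x, eps=0.5)
    print("loss before:", query(x), "after:", query(x_adv))
```

Each gradient estimate costs two queries per input dimension, which is why practical attacks on high-dimensional inputs such as images need ways to reduce the number of queries.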

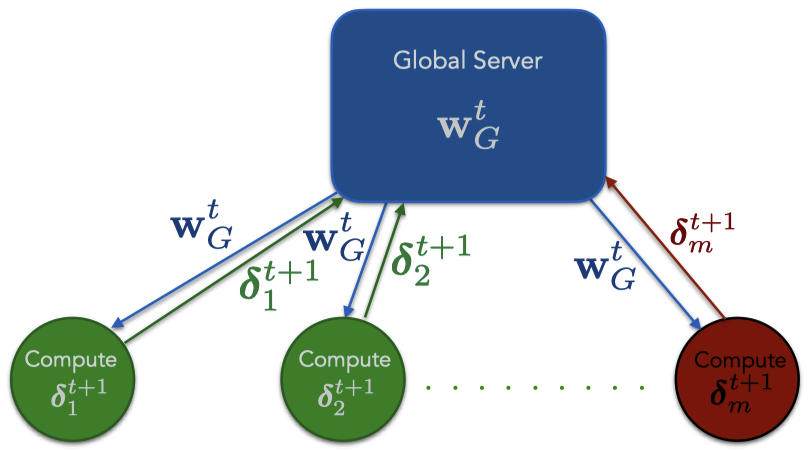

Distributed machine learning is becoming increasingly popular for its privacy and computational benefits, but it has critical security vulnerabilities, as demonstrated by our work on model poisoning attacks. We were the first to show that even a small fraction of compromised agents can cause attacker-chosen instances to be misclassified at test time.
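Why can so few agents matter? Under plain federated averaging over K agents, any single agent's update is scaled by 1/K, so a malicious agent can counteract that dilution by scaling ("boosting") its update by roughly K. The sketch below assumes unweighted averaging of parameter updates and small benign updates, uses hypothetical function names, and ignores the stealth constraints a real attacker would face; it illustrates the dilution argument only and is not the attack from our papers.

```python
from typing import List

Update = List[float]


def fed_avg(updates: List[Update]) -> Update:
    """Unweighted average of per-agent parameter updates (coordinate by coordinate)."""
    k = len(updates)
    return [sum(coord) / k for coord in zip(*updates)]


def malicious_update(target_delta: Update, num_agents: int, boost: bool = True) -> Update:
    """Scale the attacker's desired change to the global model so averaging does not dilute it."""
    factor = num_agents if boost else 1
    return [factor * t for t in target_delta]


if __name__ == "__main__":
    benign = [[0.01, -0.01]] * 9   # nine honest agents with small updates
    target = [1.0, -2.0]           # change the single attacker wants in the global model
    print("boosted:", fed_avg(benign + [malicious_update(target, 10)]))
    print("plain:  ", fed_avg(benign + [malicious_update(target, 10, boost=False)]))
```

With boosting, the aggregate lands close to the attacker's target even though only one agent in ten is compromised; without it, the malicious contribution is shrunk by a factor of ten.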

Protecting machine learning via provable and lightweight defenses

Securing machine learning systems against attacks is necessary, but security solutions are likely to be adopted only if they provide guarantees and do not significantly degrade performance. We have provided solutions that satisfy both of these conditions, against patch-based test-time attacks as well as against model poisoning attacks during training.
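One common way to obtain a provable guarantee against patch attacks is to bound how much of the model's evidence a patch can touch and then aggregate that evidence robustly. As an illustration only (a generic vote-counting certificate, not the procedure from our papers): if a prediction is the majority over per-region votes and a patch can corrupt at most k votes, the majority label cannot change when its margin over the runner-up exceeds 2k, because the top class loses at most k votes and any other class gains at most k. The vote representation, the function name, and the bound k are assumptions; in a real defense, k would follow from the architecture, for instance from the receptive field size relative to the patch.

```python
from collections import Counter
from typing import Hashable, List, Tuple


def certify_majority_vote(votes: List[Hashable], max_corrupted: int) -> Tuple[Hashable, bool]:
    """Return the majority label and whether it provably survives max_corrupted changed votes."""
    ranked = Counter(votes).most_common()
    top_label, top_count = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0
    # Worst case: the top class loses k votes while the strongest rival gains k.
    certified = top_count - runner_up > 2 * max_corrupted
    return top_label, certified


if __name__ == "__main__":
    votes = ["cat"] * 7 + ["dog"] * 2 + ["fox"]
    print(certify_majority_vote(votes, max_corrupted=2))  # margin 5 > 4
    print(certify_majority_vote(votes, max_corrupted=3))  # margin 5 is not > 6
```

The check is cheap, since it only counts votes, which fits the requirement that a defense should not significantly degrade performance.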